The quantum AI that predicts chaos and changes who controls scientific computing

Predicting fluid turbulence accurately over long time horizons is one of the most computationally expensive problems in physics. The Navier-Stokes equations have resisted efficient solution for more than a century, and classical AI models break down over long horizons because their errors accumulate systematically. On April 17, 2026, researchers at University College London published a result in Science Advances that deserves a careful reading: an AI model trained on data preprocessed by a 20-qubit quantum computer was 20% more accurate at predicting chaotic systems and required hundreds of times less memory than equivalent classical approaches.

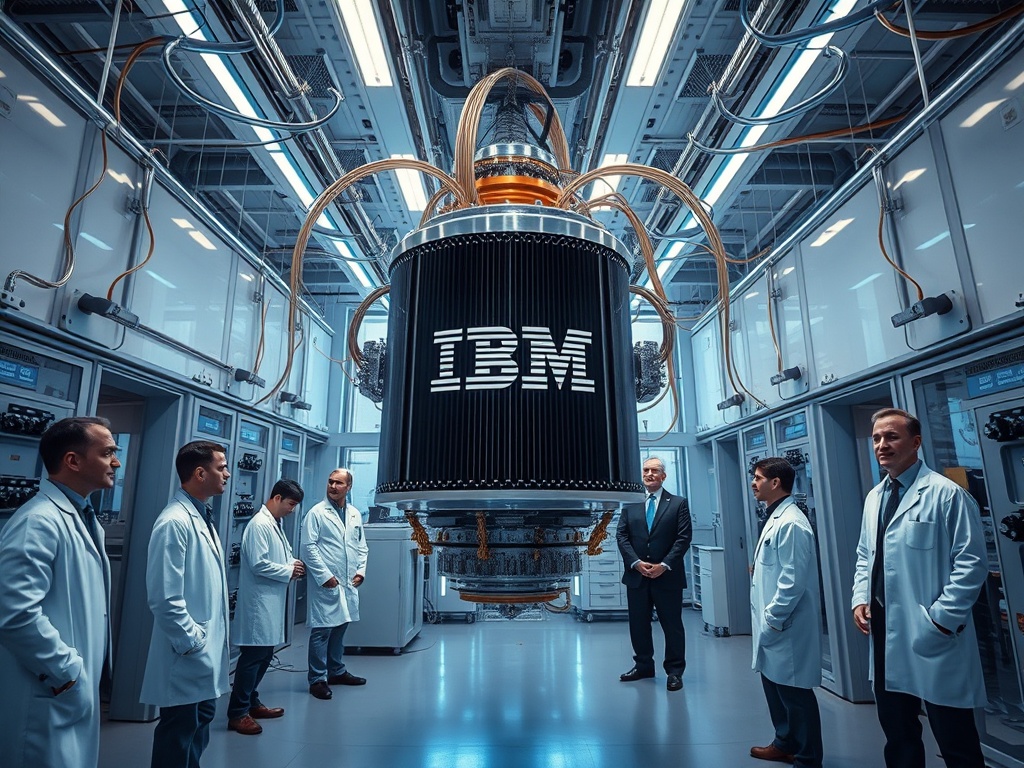

The experiment used an IQM quantum computer connected to the Leibniz Supercomputing Centre in Germany. The architecture is hybrid by design: the quantum computer is used only once, to extract invariant statistical properties of the system (patterns that persist over time even though the system itself is chaotic), and training then takes place on conventional classical infrastructure. The quantum hardware does not replace classical hardware. It is a surgical intervention at the precise point where classical computing is least efficient.
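The idea of an "invariant statistical property" can be made concrete with a purely classical toy. The sketch below assumes the textbook Lorenz-63 system as a stand-in for turbulence and does not reproduce the UCL quantum step or model; all function names are hypothetical. It integrates two runs whose starting points differ by one part in 10^8: their pointwise states soon disagree completely, while the long-run mean and spread of the z coordinate stay close.

```python
import math

# Standard Lorenz-63 parameters; this toy chaotic system is only a stand-in
# for turbulent flow, not the system studied by the UCL team.
SIGMA, RHO, BETA = 10.0, 28.0, 8.0 / 3.0


def lorenz_rhs(state):
    """Time derivative of the Lorenz-63 system at an (x, y, z) state."""
    x, y, z = state
    return (SIGMA * (y - x), x * (RHO - z) - y, x * y - BETA * z)


def rk4_step(state, dt):
    """Advance the state by one classical fourth-order Runge-Kutta step."""
    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = lorenz_rhs(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = lorenz_rhs(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def trajectory(start, steps, dt=0.01, burn_in=1000):
    """Integrate from `start`, drop `burn_in` steps, return the next `steps` states."""
    state, out = start, []
    for i in range(burn_in + steps):
        state = rk4_step(state, dt)
        if i >= burn_in:
            out.append(state)
    return out


def z_statistics(traj):
    """Time-averaged mean and standard deviation of the z coordinate."""
    zs = [p[2] for p in traj]
    mean = sum(zs) / len(zs)
    std = math.sqrt(sum((z - mean) ** 2 for z in zs) / len(zs))
    return mean, std


# Two runs whose initial conditions differ by one part in 10^8.
run_a = trajectory((1.0, 1.0, 1.0), steps=100_000)
run_b = trajectory((1.0 + 1e-8, 1.0, 1.0), steps=100_000)

# Pointwise forecast error: largest distance between the runs at equal times.
max_separation = max(math.dist(p, q) for p, q in zip(run_a, run_b))

mean_a, std_a = z_statistics(run_a)
mean_b, std_b = z_statistics(run_b)

print(f"max pointwise separation: {max_separation:.1f}")
print(f"z mean/std, run A: {mean_a:.2f} / {std_a:.2f}")
print(f"z mean/std, run B: {mean_b:.2f} / {std_b:.2f}")
```

The separation grows to the size of the attractor itself, which is why step-by-step forecasts drift, while the two sets of time-averaged statistics nearly coincide. Statistics of this kind are what a hybrid workflow can compute once and reuse during classical training.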

That is not a minor detail. It is the architectural decision that makes this result matter beyond the laboratory.

Why memory efficiency changes the economics of the problem

When Professor Peter Coveney, the study's senior author, mentions applications in climate prediction, wind farm design, and blood flow simulation, he is not speculating: he is describing industries where the computational cost of fluid dynamics simulations is an operational bottleneck with a well-known price tag. National meteorological centres spend hundreds of millions of dollars annually on supercomputing infrastructure. Pharmaceutical companies devote a significant fraction of their R&D budgets to molecular simulations that depend on approximations because exact computation is simply not viable.

Cutting memory usage by a factor of hundreds is not an incremental improvement. It means that some problems that today require a top-tier supercomputer could run on mid-range infrastructure. That lowers the threshold for access to the technology, and where that threshold sits has direct consequences for who captures the benefits.
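The step from "less memory" to "smaller machines" is, at heart, arithmetic. A minimal back-of-envelope sketch, assuming a hypothetical training set of 1,000 snapshots of a three-component velocity field on a 1024³ grid in double precision, and taking 200 as a stand-in for "hundreds of times". None of these figures come from the UCL paper, whose memory savings may apply to a different part of the pipeline.

```python
GIB = 2**30  # bytes per gibibyte

def dataset_bytes(grid_points_per_axis, snapshots, components=3, bytes_per_value=8):
    """Bytes needed to store `snapshots` copies of a 3D vector field on an N^3 grid."""
    return grid_points_per_axis**3 * components * bytes_per_value * snapshots

# Hypothetical workload: 1,000 float64 snapshots of (u, v, w) on a 1024^3 grid.
full = dataset_bytes(grid_points_per_axis=1024, snapshots=1000)

reduction_factor = 200  # hypothetical stand-in for "hundreds of times less memory"
reduced = full / reduction_factor

print(f"full: {full / GIB:,.0f} GiB, reduced: {reduced / GIB:,.0f} GiB")
# prints "full: 24,000 GiB, reduced: 120 GiB"
```

Tens of terabytes held in memory typically have to be spread across many nodes of a large cluster, whereas about 120 GiB fits in the RAM of a single high-memory server.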

The strategic question is not whether the method works (the peer-reviewed paper supports that it does) but who captures the efficiency gains it generates. If IQM and supercomputing centres like Leibniz offer access to this capability as a closed, premium-priced service, the cost reduction stays with the provider. If the hybrid workflow is documented, standardised, and made reproducible on accessible hardware, the benefit flows toward climate laboratories, universities, and the small and mid-sized energy companies that today cannot afford these simulations.

There is no technical answer to that dilemma. It is a business-model decision that the funders (UCL, the UK's Engineering and Physical Sciences Research Council, IQM, and Leibniz) will make over the next 18 to 36 months.

The pattern the quantum market keeps repeating and its consequences

This result arrives at a moment when the narrative around quantum computing is under pressure. For years, the sector promised quantum supremacy as a singular and definitive event. What is emerging is more nuanced and, from the standpoint of applied value, considerably more interesting: specific advantages, bounded to concrete tasks, integrated with existing classical infrastructure.

In October 2025, Google Quantum AI reported a 13,000-fold speedup over the Frontier supercomputer in a physics simulation that used 65 qubits. In March 2026, a team from the University of Science and Technology of China published a nine-spin quantum system that matches the performance of a 10,000-node classical network in weather forecasting. The UCL result adds to that pattern: demonstrable advantages not in abstract benchmarks but in problems with direct economic value.

The structural risk of this pattern is well known in the enterprise software industry. When a capability moves from being experimental to being demonstrable, the market faces a bifurcation: providers that control access can extract positional rents, or they can build on open standards that allow for mass adoption. The first option maximises short-term revenue; the second builds a market large enough for all actors in the ecosystem to gain more in absolute terms.

The track record of high-performance scientific software suggests that open models, or semi-open models with commercial support, tend to capture more total market share than closed ones. There is no structural reason for hybrid quantum computing to be the exception, but there is equally no guarantee that the main players will make that choice.

The value that accumulates where it is least talked about

The study's lead author, Maida Wang, described the result as a demonstration of "practical quantum advantage." The distinction between "practical" and "theoretical" is precisely what determines whether this work generates economic value or remains an academic milestone. Practical means that the workflow is reproducible on existing hardware, that operational costs are manageable, and that the result scales to real data, not just to laboratory simulations.

The UCL team explicitly acknowledges that the current results have been validated only on simulation data, and that extending the method to real climate or turbulence data is still pending work. That gap between simulated validation and field validation is where the risk to adoption is concentrated. It is not an insurmountable technical problem, but it is the point at which many computational advances have lost momentum.

What makes this case different is the structure of its funding and collaboration. IQM has a direct incentive to show institutional clients that quantum hardware delivers applied value. Leibniz has an incentive to position itself as a hybrid computing node for European research. UCL has both academic and technology-transfer incentives. All three sets of incentives point toward taking the result to field validation, an alignment that is unusual in fundamental quantum research.