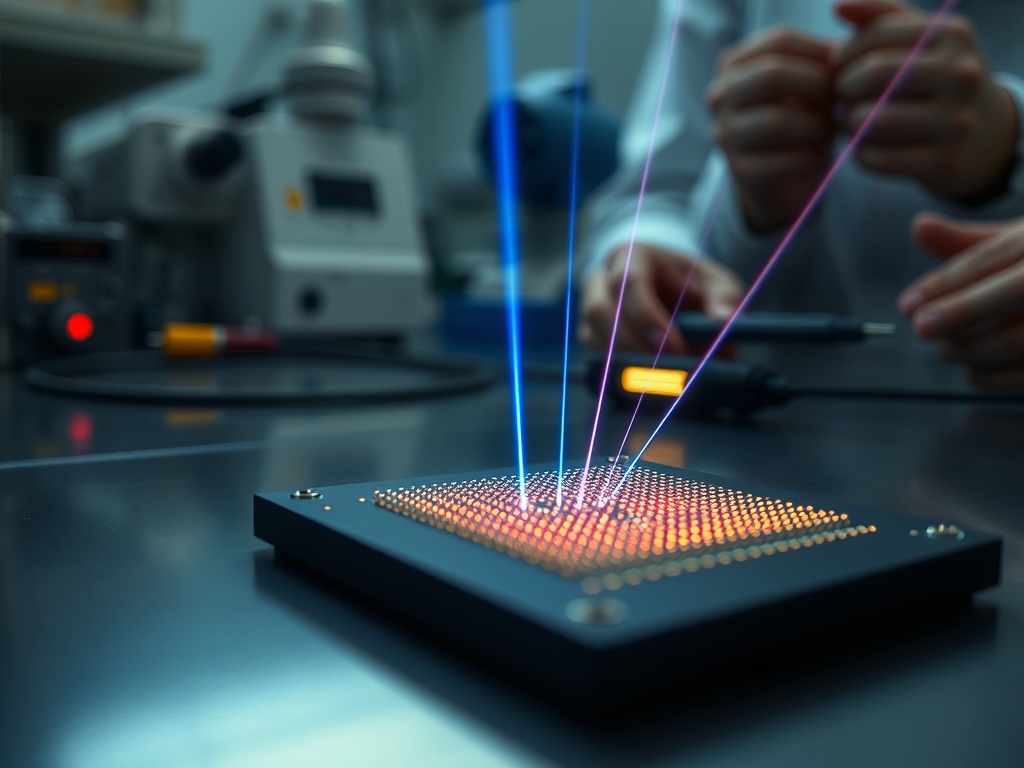

MIT has recently introduced a missing piece in the conversation about quantum scalability: a photonic chip that fires thousands of individually controllable laser beams into free space. This detail is not just aesthetic; it is operational. In many approaches to quantum computing, light is not an accessory; it is the control mechanism. If one wants to govern large numbers of qubits, the issue shifts from "having a laser" to orchestrating thousands of beams with repeatable precision, without the system ballooning into a room-sized laboratory.

The analogy used by researcher Henry Wen helps illustrate the scale: it is like "firing a T-shirt cannon" into a crowd in a stadium, but with selective aim and simultaneous action. This leap from bulk optics to a dense on-chip emission platform also opens up a second, equally crucial front. In a parallel line of work, MIT reports a chip with nanometer-scale antennas and waveguides that, according to available coverage, cools trapped ions to temperatures nearly ten times below the standard Doppler limit and cools them ten times faster.

For an executive, the correct takeaway is not "how elegant is the photonics?" but rather what new kind of infrastructure becomes possible when optical control is compacted and manufactured as a component. The quantum market has been rife with promises; what is starting to appear here is a concrete pathway to turn those promises into industrial architecture.

From Lab to Factory: The Critical Step is Controllable Density

The technical milestone, as described, lies in the ability to interface two worlds that typically collide: the chip-based photonic world, where light travels along waveguides as though they were "cables," and the free-space world, where the beam propagates and must be aimed at a physical target. The platform from Englund's lab at MIT incorporates tiny structures that curve upward from the chip's surface, launching and steering light out of the plane of the chip. The stated result is an array of thousands of laser beams, each individually controllable, operating at the physical limit of "pixel" size.

This phrase about the "physical limit" is more significant than it appears. In computing and communications, economies of scale accelerate when a parameter becomes dense and repeatable: transistors per area, channels per fiber, cells per battery. In quantum control, that density rarely exists because traditional optics introduces friction: alignment, vibration, thermal drift, maintenance, and a heavy dependence on highly specialized personnel to sustain operation.

Meanwhile, the ion cooling work integrates polarization-diverse antennas and curved notches that generate a rotating vortex of light, maximizing light delivery to the ion and stabilizing the optical routing without bulky external lasers. From a product standpoint, what is being purchased here is operational stability. Fewer external optics mean less vibration, which translates to fewer errors in quantum systems. The sources give no cost figures, but the mechanism is clear: compactness reduces sensitivity to the physical conditions that currently inflate the cost of operating prototypes.

The point that many leadership teams underestimate is that “scalability” does not merely mean more qubits; it means more qubits with less human intervention per unit of capacity. When thousands of beams become an "optical engine" on silicon, a door opens to industrialization, and with it the risk of industrial failure if the organization does not know how to manage the transition.

The Economic Unit Changes When Optical Control Becomes a Component

If a single chip can emit and control thousands of outgoing beams, the marginal cost of adding control channels begins to resemble that of semiconductors rather than lab optics. It is a change in the economic unit: spending shifts from manual integration and calibration toward manufacturing, packaging, testing, and batch yield.

This shift has two business implications.

First, the map of suppliers and internal capabilities gets reconfigured. A company aiming to build on this type of platform becomes less dependent on laboratory “wizards” and more reliant on manufacturing engineering, metrology, quality control, and supply chain. The risk is no longer solely that the experiment might not work; it is that production yield becomes unpredictable, or that the opto-mechanical packaging eats up any integration gains.

Second, adjacent applications arise. The report mentions lidar, high-speed 3D printing through rapid beam curing, and high-resolution displays as plausible examples. There is no need to promise timelines that are not in the sources. What needs to be acknowledged is the pattern: when a light control technology scales in channels and controllability, its future is not confined to a single industry. And when a technology becomes multi-industry, competition becomes asymmetric: the rivals are firms with production muscle, certifications, and access to end markets, not just laboratories.

From a finance perspective, the sober angle is this: the advantage lies not just in intellectual property, but in the capacity to take a delicate artifact to a production line with repeatable specifications. Many companies fail here for a social reason, not a technical one: their collaboration networks are too closed, and their decisions are too centralized.

Social Capital Decides Whether This Scales Beyond the Paper

MIT frames the advance within the Quantum Moonshot Program, a collaboration among MIT, the University of Colorado at Boulder, MITRE Corporation, and Sandia National Laboratories, and mentions a focus on diamond-based qubits controlled by lasers. The list of partners matters because it reveals an uncomfortable truth about deep technology: when the problem is complex, execution depends on horizontal networks that connect research, applied engineering, and institutional needs.

My perspective, considering diversity, equity, and social capital, is pragmatic: this sort of platform cannot be achieved solely through budget; it is earned through collaborative architecture. If power remains with a homogeneous “inner circle,” the organization tends to optimize for what it understands: academic metrics, internal milestones, or integration with the stack it already dominates. This creates blind spots.

The following are typical blind spots in transitions like this. They are not attributed to anyone in particular, since the sources do not describe internal governance:

- Who defines the success criteria. A team may declare victory for “thousands of beams,” while operations needs tolerances, maintainability, and testing protocols. If operations enters late, the costs will surface later, and they could be significant.

- Who operates the system when it leaves the lab. The transition to clients or deployments requires profiles typically outside the research circle: technicians, production engineers, laser safety specialists, compliance officers. If these profiles do not have a voice early on, the solution is designed for the wrong environment.

- Who benefits from the learning. In high-barrier technologies, learning becomes an asset. If the organization lacks mechanisms for giving first and sharing lessons with partners and peripheral teams, knowledge becomes siloed, politicized, and stalled.

Diversity here is not just a slogan; it is a risk mitigation strategy. Teams with diverse backgrounds detect different faults: one sees manufacturing yield, another sees calibration protocols, another sees field safety, and another sees maintenance costs. When all are alike, they share the same mental map and confuse consensus with certainty.

Disruption Comes Not from Physics but from Organizational Design

The chip that launches thousands of beams into free space and the chip that cools ions with integrated antennas point toward a common destination: turning quantum control into compact infrastructure. If that destination materializes, the field will stop rewarding whoever has the best artisanal setup and start rewarding whoever best governs the chain of decisions: design, manufacturing, testing, packaging, operation, reliability.

This shift also reorders power. Leadership that only understands the scientific narrative may underestimate human bottlenecks: recruitment, training, internal standards, incentives between research and engineering, and collaborative agreements that do not break at the first disagreement over timelines.

There is no need to romanticize it. The sources do not provide commercialization timelines or savings figures. Therefore, the responsible executive posture is to treat it as what it is: a technical enabler with broad potential and engineering uncertainty. The winning strategy is to build optionality: pilots, co-development agreements, and above all, an organization capable of absorbing learning without squandering it on internal politics.

The mandate for the C-suite is direct: at the next board meeting, look at your inner circle and recognize that if everyone is too similar, they inevitably share the same blind spots, which makes them prime victims of disruption.