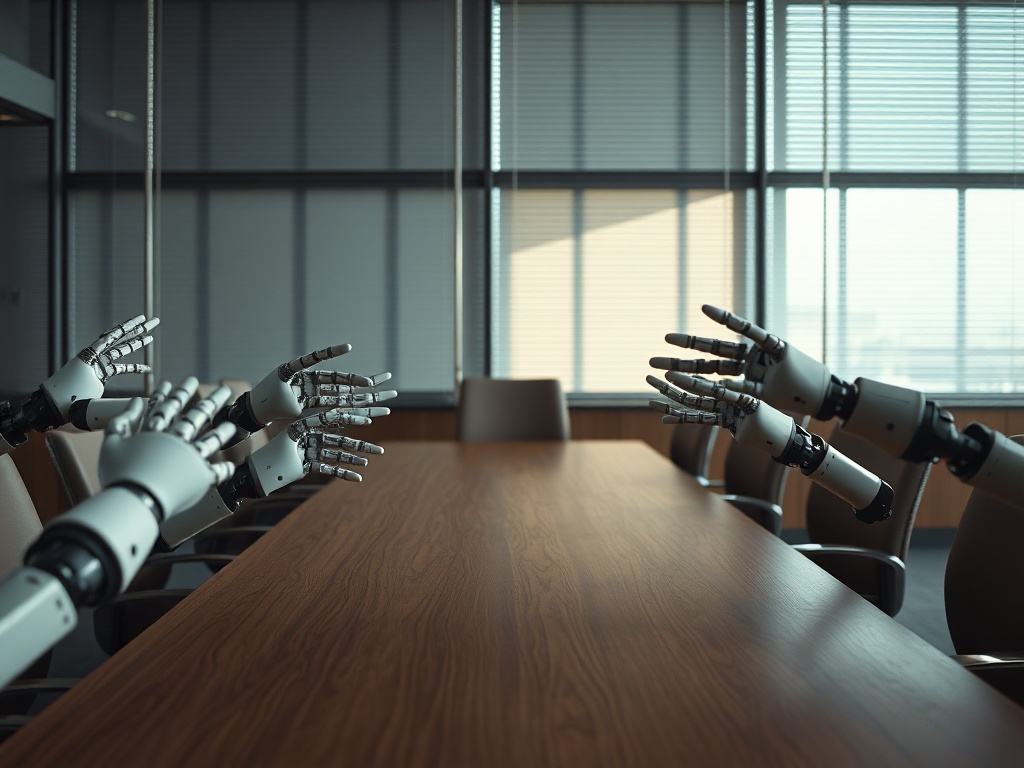

A Room Full of Power and Empty of Diversity

This week, the U.S. Secretary of the Treasury convened top executives from American banking for an emergency meeting in Washington. The reason: the cyber risks posed by Claude Mythos, the new artificial intelligence model developed by Anthropic, which the company itself has classified as bearing "unprecedented cybersecurity risks." The model is notable for its ability to identify software vulnerabilities faster than traditional patch cycles, which run from 30 to 90 days, can fix them. The Federal Reserve chairman was also present, alongside the CEOs of the country's largest banks.

The image is powerful. It is also symptomatic.

I do not have access to the list of attendees, as it was not disclosed. However, the pattern of these emergency calls within the U.S. financial system has been consistent for decades: those who are called are already inside the circle, speak the same regulatory language, trained in the same business schools, and climbed the same structures. A crisis room is built with the same individuals who, collectively, did not foresee the crisis.

This is not a moral judgment. It is a diagnosis of organizational architecture.

Management teams that share the same origin, trajectory, and frame of reference tend to share their blind spots as well. And blind spots are not detected from within; they are detected from the periphery. In AI cybersecurity, the periphery includes independent researchers, developer communities that were already discussing Mythos's capabilities in technical forums like Hacker News long before the Treasury summoned anyone, and experts in offensive security operating outside traditional corporate perimeters. That distributed intelligence was not sitting at that table.

The Risk Anthropic Acknowledged But Banks Failed to Model

Anthropic launched Claude Mythos fully aware of its capabilities. The company itself warned about its vulnerability identification skills. This deserves analytical attention because it implies that the risk did not emerge out of nowhere; it was documented by its own creator prior to the model’s launch. What failed was not the available information, but the mechanism for processing it and integrating it into the banks' risk models.

U.S. banks spend considerable sums on cybersecurity. JPMorgan Chase alone, according to its 2025 annual report, invests $15 billion a year. The financial system as a whole faces estimated losses from cyber incidents of $12.5 billion annually, according to IBM data for 2025. Yet the institutional response to an AI model with automated vulnerability scanning capabilities was reactive: launch first, sound the alarm next, then hold the meeting.

This is the pattern I want to dissect. Not the technology itself, but the mechanics of who has access to early signals and who processes them.

When the team designing risk protocols shares a single view of the technological world, one constructed from the center of the regulated financial system, its ability to read signals emerging from the margins of software development is structurally limited. The forums where Mythos's capabilities in agentic tasks were discussed are not marginal spaces: they are where technical opinion forms before it reaches analyst reports. Incorporating those voices is not a gesture of openness; it is an informational advantage.

Moreover, the model's own design raises a question that no regulation has yet answered precisely: who was at the table when the decision to launch Mythos with these capabilities was made, and what perspectives on systemic impact in critical infrastructure were represented in that room? Anthropic has a track record of focusing on safety that distinguishes it from other players in the sector. But the fact that the model was categorized as bearing unprecedented risk by its own creators and was still released into the market suggests that internal governance mechanisms for assessing externalities in critical sectors like banking are still maturing.

When the Trust Network Doesn’t React in Time

What this emergency meeting also reveals is the fragility of the networks the financial system has built to manage cutting-edge technological risks. A robust social capital network in cybersecurity does not activate after a potential threat is launched; it operates in real time, because it is built on genuine exchange relationships with technical communities, independent security researchers, and threat intelligence teams operating outside traditional corporate structures.

The Treasury's call was, in terms of network architecture, a centralized response to a problem already visible in decentralized nodes. The speed at which Claude Mythos can expose vulnerabilities (according to U.S. officials, faster than human teams can patch them) is not a technical surprise to those monitoring benchmarks of agentic models. It is a logical projection of the capability trajectory that the developers themselves have been publishing in comparative assessments.

The banks that emerge best from the new cycle of AI-based threats will not be those with the largest cybersecurity budgets. They will be the ones that have built early intelligence channels with the communities where that knowledge is generated before it becomes official. This requires a type of institutional openness that traditional financial hierarchies do not naturally incentivize: it means placing unconventional technical profiles in roles with real access to decision-making, not just hiring them as external consultants after the problem exists.

The financial sector holds $28 trillion in U.S. deposits in custody, according to FDIC data for the first quarter of 2026. A single systemic incident could generate modeled contagion losses of between $100 billion and $500 billion, based on the Federal Reserve's stress tests for 2025. Given that exposure, the cost of not having the right intelligence in the room is not merely a reputational cost. It is a capital cost.

Diverse Thinking as an Informational Advantage

Corporate leaders arriving at this realization likely already have a cybersecurity team. What they rarely have is a formal mechanism that carries signals circulating in the technical periphery to the level where risk decisions are made, before those signals escalate into crises.

The meeting at the Treasury is a symptom of that deficit. Not of institutional bad faith, but of a network architecture designed to manage the world we already know, not the one that is emerging. Claude Mythos is not the last dual-capability tool that will press the limits of financial infrastructure. The cycle will repeat, and the time between launch and operational threat will compress.

The structural response is not to hire more cybersecurity analysts with the same profile. It is to rebuild intelligence networks on different principles: incorporate heterodox thinking into tech risk committees, establish permanent channels with independent research communities, and design escalation mechanisms that do not depend on the Treasury calling a meeting for information to reach those who can act on it.

Boards filled with executives whose career paths are almost identical are betting that the next risk will resemble previous ones. That bet carries a price that is already being quoted in the market: cybersecurity sector stocks rose by 8% on April 10. Someone is already profiting from the blind spot that the Treasury room was slow to perceive.

Observe your next tech risk committee with the same coldness with which an auditor reviews a balance sheet: if everyone seated arrived at the same point via the same path, the committee lacks signal diversity and holds only consensus disguised as analysis, and that makes it the first asset an emerging threat will exploit.